This section lists all blog posts, regardless of topic.

The layers of an AI

July 9, 2008

An AI, in the classical sense, has different layers, and each of those layers represents an area of study with its own data structures and algorithms.

Core layer

The core of the AI is the data structure in which it represents its understanding of the world. Paired with this is a set of algorithms that perform basic operations on the data structure, and building on top of those are algorithms that use the data structure to evaluate whether an idea "makes sense", to answer questions via reasoning, and so on.
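As a rough illustration of the core layer, here is a minimal sketch that assumes the world model is a set of (subject, relation, object) facts, the same shape as the (x) (relation) (y) notation used in the posts below. The class name, its methods, and the `body_part` fact are hypothetical, and the transitive `is_a` check stands in for "answering questions via reasoning" only in the most basic sense.

```python
class TripleStore:
    """Hypothetical core world model: a set of (subject, relation, object) facts."""

    def __init__(self):
        self.facts = set()
        # Index facts by subject so "everything known about X" is a quick lookup.
        self.by_subject = {}

    def add(self, subject, relation, obj):
        fact = (subject, relation, obj)
        self.facts.add(fact)
        self.by_subject.setdefault(subject, set()).add(fact)

    def query(self, subject=None, relation=None, obj=None):
        """Return the facts matching a pattern; None acts as a wildcard."""
        if subject is None:
            candidates = self.facts
        else:
            candidates = self.by_subject.get(subject, set())
        return {
            (s, r, o)
            for (s, r, o) in candidates
            if (relation is None or r == relation) and (obj is None or o == obj)
        }

    def is_a(self, thing, category):
        """A tiny piece of reasoning: follow is_a edges transitively."""
        seen, frontier = set(), [thing]
        while frontier:
            current = frontier.pop()
            if current == category:
                return True
            if current in seen:
                continue
            seen.add(current)
            frontier.extend(o for (_, _, o) in self.query(current, "is_a"))
        return False


store = TripleStore()
store.add("left_eye", "is_a", "eye")
store.add("eye", "is_a", "body_part")  # hypothetical fact for the example
print(store.is_a("left_eye", "body_part"))  # True
print(store.is_a("eye", "left_eye"))        # False
```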

Language in layer

Beyond this core is the need to accept language as input. This is a substantially different problem that requires its own data structures and algorithms, but this layer depends heavily on the core layer, both to evaluate whether a possible interpretation of a phrase makes sense and, ultimately, to store the resulting understanding.

Language out layer

An AI doesn't just need to accept language as input; it also needs to use language as an output medium.

Auditory in layer

Translating the spoken word into a textual representation.

Auditory out layer

Translating text into the spoken word.

Social layer

Any social agent in this world needs more than intelligence and language abilities: it needs an understanding of how to behave appropriately. For example, having a conversation is quite a complex interaction, with many unwritten rules.

Vision layer

Creating a mental model of a spatial environment via image analysis.

Personality layer

Perhaps least important, but still of interest, is the personality layer, mostly for its relationship with the social layer. How does an AI add color to its personality? Does this happen implicitly, or does it represent another layer of complexity that needs to be developed?

My areas of interest

I'm personally most interested in the core and language in layers.

Representing relationships with nodes

July 9, 2008


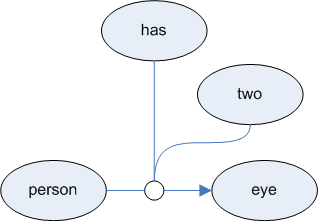

To allow us to attach properties to instances of relationships, relationships must themselves have a node.

In the above diagram, the white circle in between (person) and (eye) is itself a node, and so we're back to being able to represent a relationship with a simple directed graph.
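A minimal sketch of the idea, assuming a graph stored as (source, label, target) edges: instead of one edge from person to eye, `relate` creates a node for the relationship instance, and that node can then carry properties of its own. The edge labels `rel`, `obj`, and `type`, the `has_a#1` naming scheme, and the `count` property are all hypothetical choices made for illustration.

```python
from itertools import count


class Graph:
    """A directed graph stored as (source, label, target) edges (hypothetical sketch)."""

    def __init__(self):
        self.edges = set()
        self._next_id = count(1)

    def add_edge(self, source, label, target):
        self.edges.add((source, label, target))

    def relate(self, subject, relation, obj):
        """Give one relationship instance its own node instead of a single edge."""
        node = f"{relation}#{next(self._next_id)}"  # hypothetical unique-ID scheme
        self.add_edge(subject, "rel", node)    # subject -> relationship node
        self.add_edge(node, "obj", obj)        # relationship node -> object
        self.add_edge(node, "type", relation)  # which relationship this is
        return node

    def targets(self, source, label):
        """All targets reached from `source` along edges labeled `label`."""
        return {t for (s, lbl, t) in self.edges if s == source and lbl == label}


g = Graph()
eyes = g.relate("person", "has_a", "eye")
# The relationship is a node, so a property is just one more edge from it.
g.add_edge(eyes, "count", 2)
print(g.targets(eyes, "count"))  # {2}
```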

We can represent this notationally like this:

Complex relationships

How could we model "has zero or more"? One possibility:

This could be represented textually as:

Challenges with has_a

July 9, 2008


The difficulty with the has_a relationship becomes apparent when you try to represent the fact that a person has two eyes. The following doesn't do the trick, and raises the question: does "has_a" imply that an entity has just one of the specified thing?

(person) (has_a) (eye)

In this instance, we can be clever and say:

(left_eye) (is_a) (eye)

(right_eye) (is_a) (eye)

(person) (has_a) (left_eye)

(person) (has_a) (right_eye)


But this approach doesn't work in the general case.
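The named-instance trick can be checked mechanically. The sketch below, using a hypothetical `count_parts` helper over the four facts above, recovers "two eyes" by counting the distinct has_a targets that are is_a eye. The count only exists because each eye was given its own name; there is no finite list of names to write down for something like "zero or more sisters", and a count of 0 cannot tell "has no sisters" apart from "nothing recorded".

```python
facts = {
    ("left_eye", "is_a", "eye"),
    ("right_eye", "is_a", "eye"),
    ("person", "has_a", "left_eye"),
    ("person", "has_a", "right_eye"),
}


def count_parts(facts, owner, kind):
    """Count the named things `owner` has_a that are is_a `kind` (hypothetical helper)."""
    owned = {o for (s, r, o) in facts if s == owner and r == "has_a"}
    return sum(1 for part in owned if (part, "is_a", kind) in facts)


print(count_parts(facts, "person", "eye"))  # 2
```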

The has_a relationship actually represents a couple of related ideas:

1. has(n), where n is a number from 0 to infinity

   has(0) means "has none of"

   has(1) means "has one of"

   has(2) means "has two of"

   etc.

2. has(n+), where n is a number from 0 to infinity

   has(0+) means "has zero or more of"

   has(1+) means "has one or more of"

   has(2+) means "has two or more of"

   etc.
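One way to pin down the two readings is to treat each as a test on an actual count: has(n) accepts exactly n, and has(n+) accepts n or more. The `Has` class and its `allows` method are hypothetical, a sketch of the semantics described above rather than a proposed representation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Has:
    """Hypothetical model of has(n) and has(n+)."""
    n: int
    or_more: bool = False  # False -> has(n), True -> has(n+)

    def __post_init__(self):
        if self.n < 0:
            raise ValueError("n must be a number from 0 upward")

    def allows(self, actual):
        """Does an actual count satisfy this relationship?"""
        return actual >= self.n if self.or_more else actual == self.n

    def __str__(self):
        return f"has({self.n}{'+' if self.or_more else ''})"


print(Has(2), Has(2).allows(2), Has(2).allows(3))            # has(2) True False
print(Has(0, or_more=True), Has(0, or_more=True).allows(5))  # has(0+) True
```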

This gives us a lot more flexibility. For example:

(person) (has(2)) (eye)

(person) (has(0+)) (sister)

If you're uncomfortable with the fact that "eye" and "sister" aren't plural here, don't worry: that is a carryover from the English language and isn't necessary to encode the bare meaning.

Although has(n) and has(n+) do the trick in these cases, they represent a clear departure from one of our primary goals, which is to be able to represent knowledge with little more than a directed graph. This example underscores how tempting it is, even early in the design of an AI's knowledge representation, to abandon a directed graph representation.

A problem highlighted here is how to deal with parameterized relationships. Is there a way to represent parameterized relationships using a graph? In a sense, we already have, since every ternary relationship has one parameter: the relationship itself. (Remember that the fundamental relationship is the binary relationship, which simply says that there is a relationship between two things but doesn't specify what that relationship is.)

What is emerging here is that a relationship probably isn't best represented using just an edge of a graph. Instead, each instance of a relationship needs to be represented by its own node in the graph. This allows us to attach properties to the relationship itself.

Watered-down has_a

Another strategy is to water down the has_a relationship to mean "has zero or more of". The problem with this is that it doesn't tell us a whole lot: (person) (has_a) (eye) would no longer distinguish a person with two eyes from one with none. The advantage is that it maintains the goal of using a directed graph.

Specific but limited has_a

A third strategy is to allow more precision, but in a limited way, by using specific relationships rather than generalized ones:

has_1

has_2

has_3

has_4

...

has_0+

This is probably the weakest long-term solution, but there may be some merit in adopting its simplicity for the sake of not abandoning our directed graph mandate while still allowing forward progress.
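A sketch of the fixed-vocabulary approach, assuming each label maps to a (minimum, maximum) count with `None` meaning unbounded. The `SPECIFIC_HAS` table and `satisfies` helper are hypothetical. Each label is still an ordinary edge label, so facts such as (person) (has_2) (eye) remain plain triples; the cost is that any count missing from the table, such as has_5, simply cannot be expressed.

```python
# Hypothetical fixed vocabulary of specific has relationships.
# Each maps to (minimum, maximum); None means no upper bound.
SPECIFIC_HAS = {
    "has_1": (1, 1),
    "has_2": (2, 2),
    "has_3": (3, 3),
    "has_4": (4, 4),
    "has_0+": (0, None),
}


def satisfies(relation, actual):
    """Check an actual count against one of the fixed labels."""
    if relation not in SPECIFIC_HAS:
        # The vocabulary is closed: counts without a label can't be expressed.
        raise KeyError(f"no specific relationship named {relation!r}")
    low, high = SPECIFIC_HAS[relation]
    return actual >= low and (high is None or actual <= high)


print(satisfies("has_2", 2), satisfies("has_2", 3))  # True False
```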

Complex "has"

What is being hinted at here is that we need to represent the has relationship with its own node and then attach properties to it.
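Putting the pieces together, here is a minimal sketch of has as its own node, assuming edges are stored as (source, label, target) triples. The hypothetical `add_has` helper parses the has(n)/has(n+) notation from above and turns the parameter into ordinary edges from the has node: a `min` edge always, and a `max` edge only for an exact count. The edge labels, the caller-supplied node IDs, and the choice of min/max properties are assumptions for illustration.

```python
import re

# Matches has(n) or has(n+), capturing n and the optional "+".
HAS_PATTERN = re.compile(r"has\((\d+)(\+?)\)")


def add_has(edges, subject, notation, obj, node_id):
    """Encode has(n) or has(n+) as a has node whose bounds are plain edges."""
    match = HAS_PATTERN.fullmatch(notation)
    if match is None:
        raise ValueError(f"not has(n) or has(n+): {notation!r}")
    n, or_more = int(match.group(1)), match.group(2) == "+"
    edges.add((subject, "rel", node_id))  # subject -> has node
    edges.add((node_id, "type", "has"))   # the node is a has relationship
    edges.add((node_id, "obj", obj))      # has node -> the thing had
    edges.add((node_id, "min", n))        # lower bound always present
    if not or_more:
        edges.add((node_id, "max", n))    # has(n): exact count
    # has(n+): no "max" edge, i.e. unbounded above
    return node_id


edges = set()
add_has(edges, "person", "has(2)", "eye", "has#1")
add_has(edges, "person", "has(0+)", "sister", "has#2")
print(sorted(e for e in edges if e[0] == "has#2"))
# [('has#2', 'min', 0), ('has#2', 'obj', 'sister'), ('has#2', 'type', 'has')]
```

Because the count now lives on the node rather than in the edge label, the set of labels stays fixed (`rel`, `type`, `obj`, `min`, `max`) no matter which counts are used.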
